Congressional Democrats on the Joint Economic Committee released a report this week that points to more than $20.9 billion in consumer losses from identity theft stemming from four major breaches of data broker companies. US senator Maggie Hassan launched the investigation in August after an investigation by The Markup and CalMatters, published by WIRED, found that some data brokers were hiding their opt-out tools from Google and other search engines.

The US Department of Justice’s recent release of 3 million documents related to convicted sex offender Jeffrey Epstein included grand jury subpoenas to Google that shed light on how federal investigators interact with technology companies and how those companies respond to government requests for information.

Mexican drug cartel CJNG may survive the killing of its longtime leader Nemesio “El Mencho” Oseguera Cervantes thanks in part to its fruitful use of technologies such as drones, social media, and AI. Meanwhile, the Mexican Navy announced on Thursday that it captured a semi-submersible vessel carrying nearly 4 tons of cocaine as part of a recent initiative to combat drug trafficking in the Pacific Ocean. The effort comes as the US launches its own so-called campaign against maritime trafficking through a series of deadly attacks on ships in the Caribbean.

Meanwhile, as AI assistant agents like OpenClaw explode in popularity, and sow chaos across the web, a new open source project called IronCurtain uses a unique design to secure and constrain AI agents before they go rogue.

And there’s more. Each week, we round up the security and privacy news we didn’t cover in depth ourselves. Click on the headlines to read the full stories. And stay safe out there.

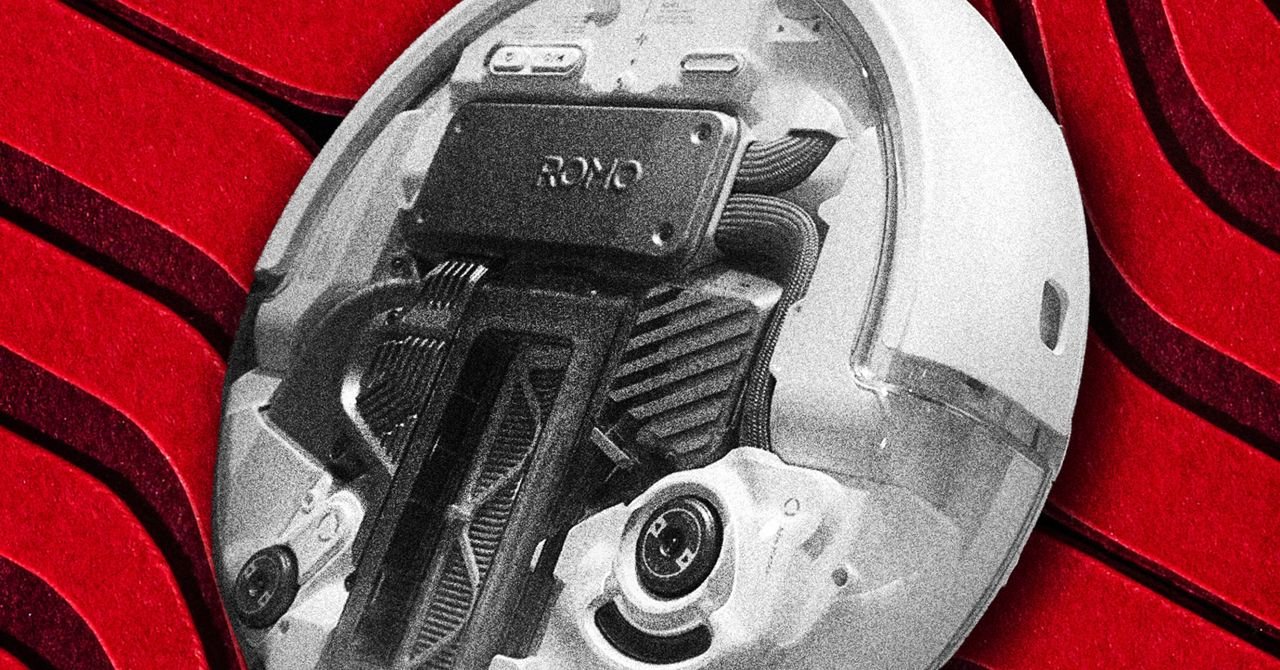

Putting an autonomous, internet-enabled robot in your home should give anyone pause. If that robot is a roving vacuum cleaner with a camera and microphone that can be hijacked from anywhere in the world with nothing more than its serial number, it becomes a real privacy horror story.

One such robovac owner, Sammy Azdoufal, discovered an egregious security vulnerability while experimenting with piloting his DJI Romo robot vacuum cleaner using a PS5 controller. He found that he could control 6,700 of the robots in 24 countries around the world, with full access to the floor plans they had created of their owners’ homes and to their video and audio feeds. When The Verge contacted Azdoufal, he immediately gained access to a Romo owned by an employee of the tech news outlet simply by knowing its 14-digit serial number. DJI has now fixed the vulnerability in response to Azdoufal essentially live-tweeting his findings. But the story nonetheless raises serious questions about the security of other audio- or video-enabled internet-of-things gadgets, not to mention those that can roam freely around your home.

While the Department of Homeland Security has been empowered under the Trump administration in its mission to deport millions of immigrants, the organization within DHS that serves as the United States’ primary cyber defender, the Cybersecurity and Infrastructure Security Agency, has been neglected. Now its acting director, Madhu Gottumukkala, has been replaced as CISA seeks to find a new footing.

Even before that news, CyberScoop reported this week on the crises that have plagued the agency in the first year since Trump’s inauguration: A third of the staff has been laid off, and entire divisions of the agency have been closed. Nominations for a permanent director have been blocked in Congress. Its capabilities have withered, and organizations that would look to CISA for help and partnership are looking elsewhere. Gottumukkala has also suffered his own, more personal scandals, including the firing of security personnel after he failed a polygraph test and his sharing of sensitive contracts with ChatGPT. Now Nick Andersen, who served as CISA’s executive director for cybersecurity, will replace Gottumukkala at the embattled agency.

A researcher at King’s College London pitted three popular large language models against each other in simulated war game scenarios and found that, 95 percent of the time, at least one of the models chose to deploy tactical nuclear weapons. The researcher also found that when one AI model deployed a tactical nuclear weapon, its AI opponent deescalated only a quarter of the time. None of the companies behind the three models, OpenAI, Google, and Anthropic, responded to New Scientist’s request for comment.

The role of AI in warfighting has become a point of contention this week. Anthropic and the War Department are embroiled in a contract dispute over whether Anthropic’s AI models can be used to operate fully autonomous weapons and conduct bulk domestic surveillance. Dario Amodei, Anthropic’s CEO, wrote in a statement that these types of use cases “damage, rather than defend, democratic values.” In response, President Donald Trump has threatened to ban the use of Anthropic products, including its Claude chatbot, within the US government. Meanwhile, hundreds of Google and OpenAI employees have signed an open letter asking their bosses to “set aside their differences and unite in continuing to refuse the current demands of the War Department for permission to use our models for domestic mass surveillance and autonomous killing of people without human supervision.”

A new app for Android phones called Nearby Glasses lets users scan for smart glasses around them, revealing the presence of the wearable gadgets, which are sometimes indistinguishable from normal glasses and allow wearers to record people without their knowledge. The app scans for unique Bluetooth signatures emitted by the glasses and sends users a notification when it detects a pair nearby.

The developer told 404 Media that he was inspired to create the app after reading about several incidents involving smart glasses. Over the summer, 404 Media reported that a Customs and Border Protection agent wore a pair during an immigration raid, and this fall the outlet also reported that men were using smart glasses to film massage parlor workers, seemingly without their knowledge or consent. In February, the New York Times reported that Meta, a smart-glasses developer, has plans to integrate facial recognition into its glasses, prompting new concerns among privacy experts.