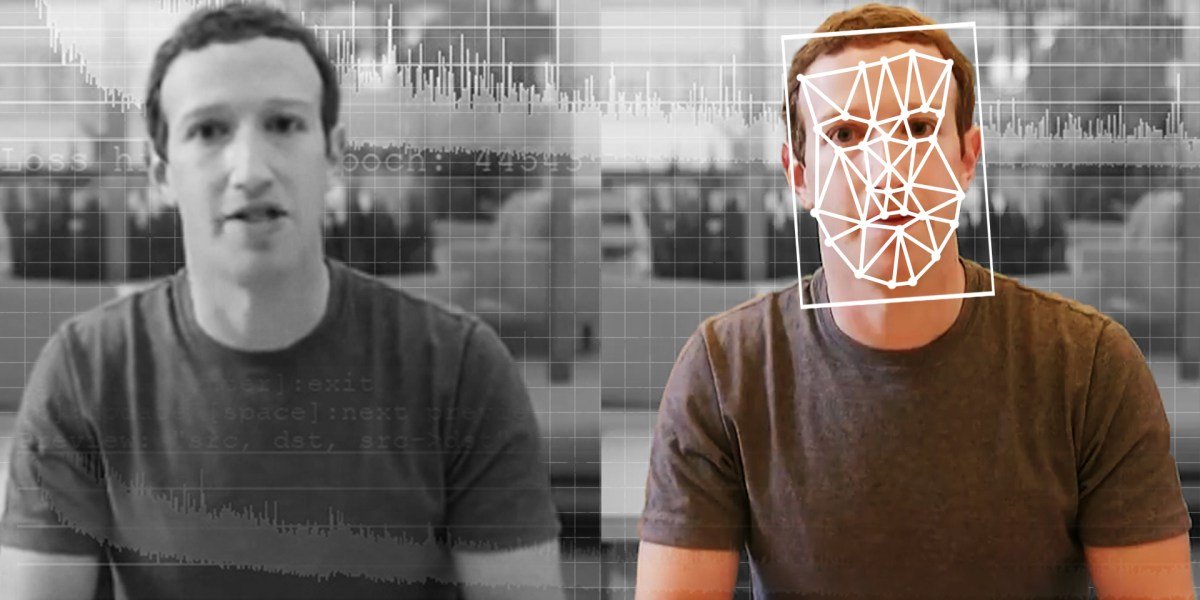

Deepfake fraud drained $1.1 billion from US corporate accounts in 2025, triple the $360 million lost the year before. By the middle of last year, documented incidents had already quadrupled the 2024 total. Yet most corporate communications and brand teams remain dangerously unprepared.

Executives today face synthetic threats from two directions: their own likenesses are cloned to authorize fraudulent transfers or inflict reputational damage, and AI-generated voices impersonating government officials, board members, and business partners are used to manipulate them.

In 2019, an unnamed British energy executive received a phone call from someone they believed to be their chief executive. The accent and subtle consonant changes were right; even the cadence was familiar. Only after wiring $243,000 did they realize the voice on the other end of the line was synthetic. Last year, scammers cloned the voice of Italy’s defense minister and called the country’s business elite. At least one target sent almost €1 million before the scam was discovered.

But these brands were lucky. Imagine the impact if a synthetic video of your CEO making inappropriate comments, announcing a botched merger, or criticizing a regulator spread across social media before your team could respond. Deepfakes are no longer a cybersecurity curiosity. They now represent a security threat, a financial risk, and a significant reputational risk.

The communication gap is wider than the security gap

Much of the coverage of deepfake threats centers on detection algorithms and verification protocols. Cybersecurity vendors offer solutions, and IT departments update policies. However, few have addressed a critical question for CMOs and CCOs: What happens to your brand if your CEO’s likeness is used for fraud, disinformation, or character attacks?

I have spent two decades advising executives through reputational crises, including regulatory investigations and media campaigns. There are established playbooks for these situations. However, there is no established protocol for incidents such as a synthetic likeness of a CEO authorizing a fraudulent takeover or a fabricated video of a founder going viral.

Executive visibility now cuts both ways

Every social media post, keynote address, podcast appearance, and earnings call involving your CEO provides potential training data for attackers. The visibility that builds executive brands and humanizes leadership also supplies the voice samples and facial mapping needed for synthetic media.

Not all attacks succeed. Last year, scammers targeted the CEO of a global advertising company. They created a fake WhatsApp account using his photo, set up a Microsoft Teams call with an AI-cloned voice trained on YouTube footage, and asked a senior executive to finance a new venture. The employee refused and the company lost nothing, but the sophistication of the attempt revealed how far the technology had come.

The number of deepfakes grew from 500,000 in 2023 to more than eight million by 2025. Voice cloning fraud rose 680 percent in one year. Losses from AI-enabled fraud are expected to reach $40 billion by 2027. Yet only 32 percent of corporate executives believe their organizations are prepared to handle a major incident.

Three questions every communications team must answer today

First, do you have a disclosure protocol for synthetic media attacks? If an AI-created replica of your CEO is used for fraud or disinformation, who communicates with whom, when, and through which channels?

Second, are you conducting deepfake tabletop exercises? Crisis simulations should now include scenarios where an executive’s likeness is used for internal fraud, external disinformation, or both.

Third, are you coordinating the response sequence with legal, cybersecurity, and investor relations? A deepfake crisis is a fraud event, a potential disclosure obligation, and a brand emergency all at once. Siloed responses fail.

Act before the attack

The companies that will weather this era are establishing crisis protocols now, before they see their executives’ faces in videos they did not record, saying things they did not say, authorizing transactions they did not approve. Your CEO’s likeness is a brand asset. It is also an attack vector.

Communications and brand teams that treat deepfakes as someone else’s problem—a cybersecurity issue, an IT concern, a financial fraud matter—will find themselves drafting apologies instead of strategies.

The opinions expressed in Fortune.com commentary pieces are solely the views of their authors and do not necessarily reflect the opinions and beliefs of Fortune.